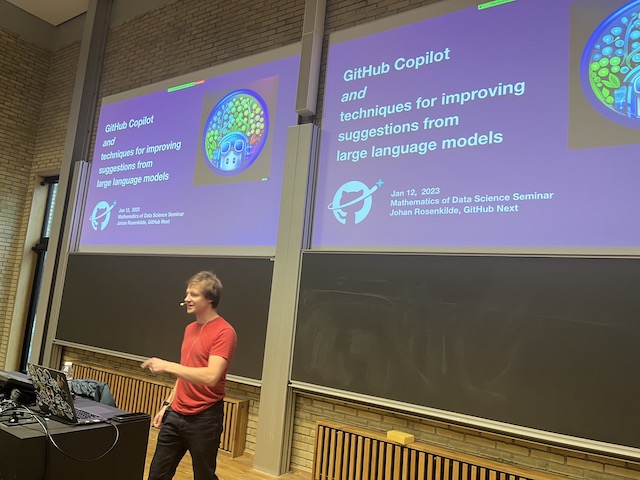

GitHub Copilot and techniques for improving code suggestions from large language models

Johan Rosenkilde, GitHub Next

12 January 2023

Progress in large language models (LLMs) has been very rapid over the last decade, and LLMs are now being used to power a growing number of real-life applications. The automatic code completion tool GitHub Copilot, powered by OpenAI’s Codex model, is one such application. Training an LLM from scratch is very GPU-intensive, so only a handful of companies, such as Google, Meta, Amazon, and OpenAI, currently dominate the state of the art. This makes it difficult for academia to operate in this space. There is, however, a neighbouring area of research that seems just as important for making LLMs impactful, and which is much less costly: given a specific LLM and a specific task, what can you do to improve its performance on that task? In developing GitHub Copilot, we faced exactly this challenge, as will every company that launches real-life applications powered by LLMs. I believe there is great potential for academic discoveries in this area, which is relatively unexplored yet potentially highly impactful.

In this talk, I will discuss a number of techniques we have explored for making GitHub Copilot better without changing the model itself, as well as the “offline” evaluation framework we use for early experiments that do not involve users. Much of this will be specific to the application of code completion, but I hope it may inspire the use of related techniques in other applications of LLMs.

About the speaker

Johan Rosenkilde is a research engineer at GitHub Next, a small team within GitHub that explores the future of software development. He co-created GitHub Copilot, the leading ML-powered tool for providing code suggestions directly in the developer’s IDE. Until 2021, he was an Associate Professor at DTU, specialising in computational algebra and error-correcting codes. He holds an MSc in software technology and a PhD in mathematics, both from DTU.